Why Your Static Roles Are Just Permission to Fail in the Agentic Era

A simple access request for a read-only role is how it starts. An autonomous agent is deployed to produce some FinOps reports on cloud spend. On paper, the governance is perfect: it has a service principal, it's been placed in an Active Directory group called "Cloud-Ops-Read-Only," and some poor security team member is the owner of this role, given nobody else wanted to volunteer... and given it is access... it HAS to be a security thing, no? They approve it in the role catalogue of your IGA, and off to the races!

Everyone feels safe. But let me tell you what actually happened behind the scenes.

Three months ago, someone in Infrastructure nested that "Cloud-Ops-Read-Only" group inside another group called "Cloud-Operations." That group, in turn, inherits permissions from "Platform-Admins" through a chain of nested group memberships that nobody fully documented. The result? Your "Read-Only" agent now has write and delete permissions across a dozen cloud accounts but nobody knows this because AD groups are opaque proxies for understanding access. They tell you who is in the group, but not what effective permissions that group actually conveys across the sprawling mesh of systems it's been wired into.
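To make the nesting problem concrete, here is a minimal sketch of how effective permissions fall out of a membership graph. The agent name and the grants attached to each group are illustrative assumptions, not data from a real directory; only the three group names come from the story above.

```python
from collections import deque

# Hypothetical membership edges: each key is a member of the groups listed.
MEMBER_OF = {
    "finops-agent": ["Cloud-Ops-Read-Only"],
    "Cloud-Ops-Read-Only": ["Cloud-Operations"],
    "Cloud-Operations": ["Platform-Admins"],
    "Platform-Admins": [],
}

# Hypothetical permissions granted directly to each group.
DIRECT_GRANTS = {
    "Cloud-Ops-Read-Only": {"read"},
    "Cloud-Operations": {"read", "write"},
    "Platform-Admins": {"read", "write", "delete"},
}


def effective_permissions(identity):
    """Walk every group reachable from the identity and union their grants."""
    seen = set()
    queue = deque([identity])
    permissions = set()
    while queue:
        node = queue.popleft()
        if node in seen:
            continue  # guards against cyclic nesting
        seen.add(node)
        permissions |= DIRECT_GRANTS.get(node, set())
        queue.extend(MEMBER_OF.get(node, []))
    return permissions


print(sorted(effective_permissions("finops-agent")))
# prints ['delete', 'read', 'write']
```

Looking at the group the agent was placed in tells you "read". Walking the graph tells you what it can actually do.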

This is the dirty secret of traditional identity governance. Groups get nested. Permissions get inherited. Static role catalogues that were never invited to the decentralized party are now a manual heritage trying to keep up. Side effects accumulate silently. And nobody has real-time observability into what any given identity can actually do across the estate. We have identity administration, but we lack real-time identity access observability.

Then the agent, fueled by a slightly too creative large language model that only wants to please its human master and exceed expectations, gets a simple prompt like "is there activity in these accounts to check if I can delete the resources in them?" and decides the best way to "optimize" is to delete every idle dev environment in US-East-1. It discovers it can. The identity was legitimate. The permissions were "approved" (in the sense that nobody deliberately denied them; they simply accumulated through nesting). But the action was a catastrophe.

Nobody broke in. Nobody stole credentials. The system worked exactly as designed, which is to say, it worked in a way that nobody designed, because nobody could see the full picture.

This tension between admin-time access and real-time access isn't new. Industry analysts like Gartner have been talking about the shift from static, provisioned access to continuous, context-aware authorization for years. The maturity models exist. The reference architectures have been published.

But here's the crucial difference: in the human-only world, moving from admin-time to real-time authorization was a choice. A maturity aspiration. Something for next year's roadmap. You could get away with quarterly access reviews because humans work business hours, take lunch, go on holiday, and generally operate at a pace that gives you time to catch mistakes. Good luck if you are going to try to sweat your existing assets and processes to fix this...

AI agents don't sleep, and they could be called up by multiple masters with varying degrees of access, so good luck baselining. They don't take lunch. They operate at machine speed, 24/7, across every time zone simultaneously. An agent with slightly too broad permissions doesn't sit on them passively for three months; it uses them, continuously, at a pace that can cause irreversible damage in minutes.

So real-time authorization is no longer a maturity journey you can take your time with... It's a design prerequisite.

Authentication Is an Adult. Authorization Is a Teenager!

Authentication, proving who you (or it) are, is the front door of your house. We've spent decades making it fortress-grade: multi-factor authentication, biometrics, passwordless flows, hardware keys, passkeys, sophisticated federation. We have a reasonable handle on it, although new needs are brewing: deepfakes are very real, and continuous authentication is a problem the industry still needs to solve.

Why is authorization still a teenager? Because it has historically been coupled to application logic: tangled in if-else statements, buried in code, expressed in proprietary vendor languages. (The time I have lost in PFCG and SAP role redesign!) Every application reinvented it differently. Unlike authentication, which was externalized through SAML, OAuth, and OpenID Connect years ago, authorization never got its own liberation movement. Free AuthZ!!!

But many great people are actively working on it. The OpenID AuthZEN Authorization API 1.0, finalized in January 2026, represents exactly that liberation movement. It does for authorization what OpenID Connect did for authentication: it creates a standard, vendor-neutral interface between the thing that enforces access and the thing that decides it. So how can we put it into practice?

The Right Question, Asked in Real Time

Most teams ask: "Does this agent have the role?" The question we should be asking is: "Does this specific action, given the current risk signals, make sense right now?"

Imagine an AI agent managing customer support tickets. Monday morning, it processes routine requests, resets passwords, updates contacts, closes resolved tickets. Its role says "Customer Support Agent."

Now it's 2am on Saturday. Same agent, same role, suddenly accessing 10,000 customer records per minute and initiating bulk exports. The role hasn't changed. The context has changed dramatically. Not a perfect example, since agents work 24/7, but you get the idea.

With static roles, nobody notices until Monday. With real-time authorization, the system asks: "Is this pattern consistent with legitimate customer support?" The answer is no, and permissions tighten automatically, in milliseconds.

That's the shift. From snapshots to streams. From "what was approved" to "what makes sense right now."

The AuthZEN specification frames this elegantly. Its Information Model structures every authorization question around four entities, Subject, Action, Resource, and Context, that together ask: Can this subject perform this action on this resource, given this context? The Context object explicitly carries environmental signals, time, location, device posture, risk scores, into every decision. And the Decision response can carry information back: reasons for denial, requests for step-up authentication, or obligations the enforcement point must fulfil. That's not just authorization. That's adaptive, conversational security.
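To show what that four-entity question might look like on the wire, here is a rough sketch using the Saturday bulk-export scenario. The top-level field names follow my reading of the AuthZEN evaluation request and response; the agent ID, the resource names, the `records_per_minute` signal, the threshold, and the `reason` key are all assumptions for illustration, and `toy_decision_point` is a stand-in for a real decision point, not part of any spec.

```python
import json


def build_evaluation_request(subject_id, action_name, resource_type, resource_id, context):
    """Assemble the four-entity question: subject, action, resource, context."""
    return {
        "subject": {"type": "agent", "id": subject_id},
        "action": {"name": action_name},
        "resource": {"type": resource_type, "id": resource_id},
        "context": context,
    }


# Hypothetical baseline for what routine customer support looks like.
MAX_RECORDS_PER_MINUTE = 500


def toy_decision_point(request):
    """Stand-in for a real decision point: deny bulk exports above the baseline."""
    context = request["context"]
    is_export = request["action"]["name"] == "bulk_export"
    too_fast = context.get("records_per_minute", 0) > MAX_RECORDS_PER_MINUTE
    if is_export and too_fast:
        # The response context carries information back to the enforcement point.
        return {"decision": False, "context": {"reason": "export rate exceeds support baseline"}}
    return {"decision": True}


request = build_evaluation_request(
    "support-agent-7", "bulk_export", "customer_records", "eu-region",
    {"time": "2026-02-07T02:00:00Z", "records_per_minute": 10000},
)
print(json.dumps(request, indent=2))
print(toy_decision_point(request))
```

The role never appears in the decision. The same agent asking for the same export at 40 records per minute would get a different answer.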

AuthZEN also provides Search APIs ("Which documents can Alice read?", "Who can edit this resource?") and batch evaluations that allow an agent to check access to fifty resources in a single call. These are the primitives that real-time, agent-driven systems need.
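A batch check for fifty resources could be shaped roughly like this. It is a sketch based on my understanding of the batch format (shared subject and action at the top level, one entry per resource in an `evaluations` array, decisions returned in the same order); the helper names, the IDs, and the fake response are invented for illustration, so check the spec before relying on the exact shape.

```python
def build_batch_request(subject, action_name, resource_ids, resource_type="document"):
    """One request: shared subject and action, one evaluation entry per resource."""
    return {
        "subject": subject,
        "action": {"name": action_name},
        "evaluations": [
            {"resource": {"type": resource_type, "id": rid}} for rid in resource_ids
        ],
    }


def allowed_resources(request, response):
    """Pair each requested resource with its decision, by position."""
    pairs = zip(request["evaluations"], response["evaluations"])
    return [item["resource"]["id"] for item, result in pairs if result["decision"]]


resource_ids = [f"doc-{n}" for n in range(50)]
request = build_batch_request({"type": "agent", "id": "support-agent-7"}, "read", resource_ids)

# Fake response: pretend the decision point allows only even-numbered documents.
response = {"evaluations": [{"decision": n % 2 == 0} for n in range(50)]}

print(len(request["evaluations"]), "checks,", len(allowed_resources(request, response)), "allowed")
# prints: 50 checks, 25 allowed
```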

The Trust Paradox: Humans, Agents, and the Illusion (theatre) of Oversight

Here's where this becomes as much a cultural challenge as a technical one.

When I talk to organizations about AI agents making autonomous decisions, the pushback is predictable: "How can we trust a non-deterministic system?"

Fair question. But think about the last time you got a sales call. The human on the line might have been misinformed, working from outdated training materials, guessing, or, let's be honest, lying. There's no audit trail for that phone call. No replay button for bad advice given at 4:55pm on a Friday.

We grant humans extraordinary implicit trust, despite the fact that humans are arguably the most non-deterministic systems on the planet. We don't demand reproducibility from call centre agents. We accept variability because we're comfortable with humans. With AI agents, we suddenly demand perfection.

This is a trust-calibration problem, not a technology problem. And it gets worse.

When organizations hear "AI agents making decisions," their instinctive response is "human-in-the-loop." It sounds responsible. It gives everyone a warm feeling.

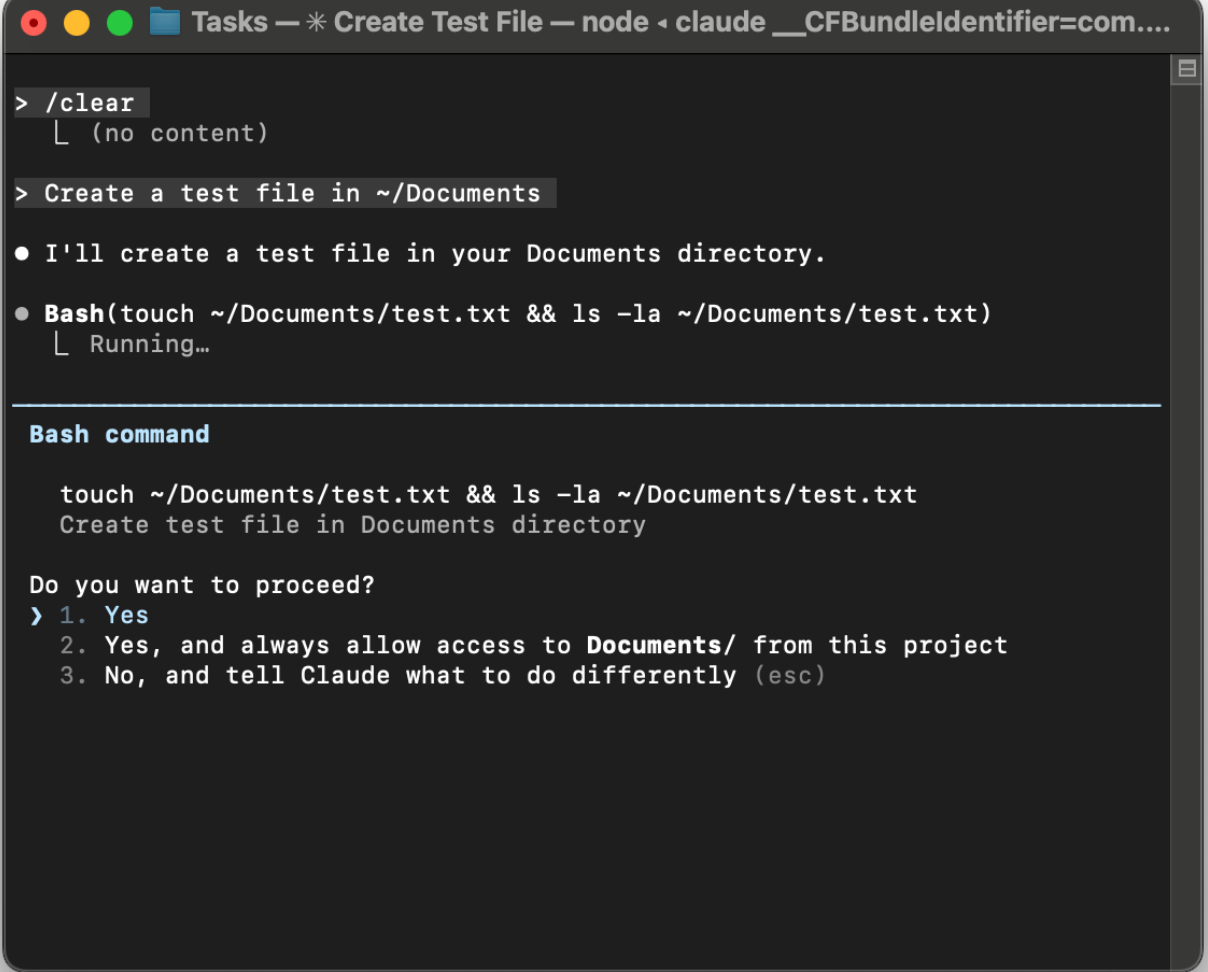

But watch a developer interact with an AI coding agent. The agent wants to run a command. A prompt appears:

How often does anyone pick option 3? After the third interruption, they click "Yes, and don't ask again", opting out of the loop entirely. This is approval fatigue. We've seen it with cookie banners, OS permission dialogs, SSL warnings. Every time we put a human in front of a rapid yes/no gate, the human becomes a rubber stamp.

If your security model depends on a human approving every action an AI agent takes, you don't have a security model. You have friction that will be optimized away the moment it becomes inconvenient.

This is why smart authorization, not human gatekeeping, has to be the primary safety mechanism. The authorization fabric does the heavy lifting: evaluating context, assessing risk, enforcing boundaries, containing blast radius. Humans should be pulled in only for genuinely exceptional situations, and when they are, they should be policy architects designing the rules the system follows, not people mindlessly clicking "Yes" forty times a day.

When AI takes over code production, the engineering discipline doesn't disappear, it migrates to specifications, tests, and constraints. The same is true for security governance. It migrates from reviewing individual access decisions to designing the authorization architecture that makes those decisions automatically.

Building the Authorization Fabric

To answer the question "does this action make sense right now?", we need three architectural layers.

1. Externalized Policy

Authorization logic today is the accidental complexity of our applications. There's an if-else check in the order processing service, a different one in procurement, another in the CRM, each written by a different developer with a different understanding of the rules. When an AI agent works across all three, which rules apply?

We need to pull authorization out of application code and into a dedicated policy layer. Open standards like OPA's Rego and AWS's Cedar create portable, testable policy languages. The AuthZEN specification provides the standard API that every application, human-facing or agent-driven, calls to request a decision. As the spec notes, it "enables Policy Decision Points and Policy Enforcement Points to communicate without requiring knowledge of each other's inner workings" (Section 1). The API is transport-agnostic (Section 10), and PDPs can self-describe their capabilities through a metadata discovery endpoint.
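Here is one way the separation could look inside an application, as a minimal sketch. The application function only declares which action it performs; the decision lives behind an interface that could later be backed by a remote AuthZEN endpoint, OPA, or Cedar. Every name here (`DecisionPoint`, `StaticRulePDP`, `enforce`, the agent and account IDs) is hypothetical.

```python
from functools import wraps
from typing import Protocol


class DecisionPoint(Protocol):
    """Anything that can answer an authorization question."""

    def evaluate(self, subject: str, action: str, resource: str, context: dict) -> bool: ...


class AccessDenied(Exception):
    pass


class StaticRulePDP:
    """Toy decision point backed by a set of (subject, action) pairs."""

    def __init__(self, rules):
        self.rules = set(rules)

    def evaluate(self, subject, action, resource, context):
        return (subject, action) in self.rules


def enforce(pdp: DecisionPoint, action: str):
    """Enforcement point: ask the decision point before running the function."""
    def decorator(fn):
        @wraps(fn)
        def guarded(subject, resource, context=None):
            if not pdp.evaluate(subject, action, resource, context or {}):
                raise AccessDenied(f"{subject} may not {action} {resource}")
            return fn(subject, resource)
        return guarded
    return decorator


pdp = StaticRulePDP({("finops-agent", "read")})


@enforce(pdp, "read")
def read_report(subject, resource):
    return f"report for {resource}"


@enforce(pdp, "delete")
def delete_environment(subject, resource):
    return f"deleted {resource}"


print(read_report("finops-agent", "acct-1"))
try:
    delete_environment("finops-agent", "dev-env-42")
except AccessDenied as err:
    print("denied:", err)
```

Swapping `StaticRulePDP` for a client that calls an external decision point changes nothing in `read_report` or `delete_environment`, which is the whole point of externalizing the policy.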

2. Risk-Aware Decisions

A policy is only as smart as the data it sees. If your authorization engine is blind to the fact that an agent's session looks like a prompt injection attack, the policy is useless. By the way, the context challenge is also very real in the vulnerability management space, where not knowing what to fix first, second, or third is a real challenge when the time from detection to exploit is being optimized by AI.

We need to stitch risk signals directly into the decision engine. Protocols like the OpenID Shared Signals Framework and CAEP help kill off those long-lived tokens issued by Cognito and others, enabling real-time security events (suspicious sessions, compromised credentials, devices falling out of compliance) to flow into every authorization decision as live context.
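As a rough illustration of signals becoming live context, the sketch below keeps a small in-memory store that is updated by incoming events and consulted on every decision. The two event-type URIs are the CAEP identifiers as I understand them. The payload shape is deliberately simplified and assumed to be already verified; real security event tokens are signed JWTs that must be validated first. `SignalStore` and `decide` are hypothetical names.

```python
SESSION_REVOKED = "https://schemas.openid.net/secevent/caep/event-type/session-revoked"
DEVICE_COMPLIANCE_CHANGE = (
    "https://schemas.openid.net/secevent/caep/event-type/device-compliance-change"
)


class SignalStore:
    """In-memory view of the latest risk signals per subject."""

    def __init__(self):
        self.revoked = set()
        self.noncompliant = set()

    def ingest(self, payload):
        """Apply one decoded, already-verified event payload (simplified shape)."""
        subject = payload["sub_id"]["id"]
        for event_type, details in payload["events"].items():
            if event_type == SESSION_REVOKED:
                self.revoked.add(subject)
            elif event_type == DEVICE_COMPLIANCE_CHANGE:
                if details.get("current_status") == "not-compliant":
                    self.noncompliant.add(subject)
                else:
                    self.noncompliant.discard(subject)

    def context_for(self, subject):
        """Signals to attach as context to the next authorization request."""
        return {
            "session_revoked": subject in self.revoked,
            "device_compliant": subject not in self.noncompliant,
        }


def decide(store, subject):
    """Toy policy: any live risk signal overrides whatever the role says."""
    context = store.context_for(subject)
    return context["device_compliant"] and not context["session_revoked"]


store = SignalStore()
print(decide(store, "agent-7"))  # no signals yet, so access continues

store.ingest({
    "sub_id": {"format": "opaque", "id": "agent-7"},
    "events": {SESSION_REVOKED: {"event_timestamp": 1770429600}},
})
print(decide(store, "agent-7"))  # the revocation takes effect on the next decision
```

No token had to expire for the second decision to flip, which is the difference between a snapshot and a stream.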

3. Standards-Based Interoperability

Here's the biggest mistake you can make: letting your AI or IGA vendor control the authorization logic. You can't buy integration. If you build on a proprietary foundation, you're building a prison.

Your architecture must be headless. Every application calls a standard API. The AuthZEN specification, Cedar, OPA, SSF/CAEP: these are the building blocks of an authorization fabric that doesn't lock you into any single vendor, because let's be frank... it's going to take a village to solve this one. This is the same pattern that transformed authentication: externalize it, standardize the interface, and liberate yourself from lock-in.

What Authorization Governance Platforms Must Deliver

Recognizing the architecture above is one thing. Building it from raw policy engines and hand-stitched pipelines is another. A new category of platform is emerging, the Authorization Governance Platform, that brings these layers together. Traditional IAM was built for a centralized, human-centric world with a handful of applications and a governance cadence measured in quarters. It was never designed for distributed, multi-cloud, agent-populated environments.

The right platform must deliver five outcomes:

Real-time access observability. Not periodic access reviews but a live access graph that exposes actual grant paths, inherited permissions, and hidden dependencies across the entire estate. Remember the nested AD group from the opening? The platform should show you, in real time, that "Cloud-Ops-Read-Only" actually conveys delete permissions through three levels of nesting. You can't govern what you can't see.
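What "exposing the grant path" might mean in practice: instead of only answering whether an identity holds a permission, return the chain of memberships that conveys it. This is a minimal sketch over a hypothetical in-memory graph; a real platform would build the graph from directory and cloud IAM data, and the agent name and grants are invented.

```python
from collections import deque


def grant_path(member_of, direct_grants, identity, permission):
    """Return the shortest membership chain that conveys the permission, or None."""
    queue = deque([[identity]])
    seen = {identity}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if permission in direct_grants.get(node, set()):
            return path
        for parent in member_of.get(node, []):
            if parent not in seen:
                seen.add(parent)
                queue.append(path + [parent])
    return None


# Hypothetical graph mirroring the opening story.
member_of = {
    "finops-agent": ["Cloud-Ops-Read-Only"],
    "Cloud-Ops-Read-Only": ["Cloud-Operations"],
    "Cloud-Operations": ["Platform-Admins"],
}
direct_grants = {
    "Cloud-Ops-Read-Only": {"read"},
    "Platform-Admins": {"delete"},
}

path = grant_path(member_of, direct_grants, "finops-agent", "delete")
print(" -> ".join(path))
# prints: finops-agent -> Cloud-Ops-Read-Only -> Cloud-Operations -> Platform-Admins
```

The path is the useful part for a reviewer: it shows which nesting to undo, not just that something is wrong.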

Unified policy control across heterogeneous enforcement points. Modern enterprises enforce authorization in API gateways, service meshes, Kubernetes, cloud IAM, SaaS platforms, and AI agent frameworks like MCP servers. The platform must orchestrate policy across all of them from a single control plane, translating business intent into whatever policy language each enforcement point speaks natively.

Adaptive, context-aware runtime enforcement. Every access decision should evaluate current context and risk signals, not just "does the role allow this" but "does the behavior, timing, and pattern suggest this is legitimate." When an action exceeds the risk budget, the platform should be able to pause, escalate to a human, or contain the blast radius automatically.
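A risk budget can be sketched as a simple cost function with tiers. The weights, the multiplier, the budget, and the three outcomes below are assumptions chosen to keep the example small, not a recommended scoring model.

```python
from enum import Enum


class Outcome(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate_to_human"
    CONTAIN = "contain"


# Hypothetical weights: destructive actions cost far more than reads.
ACTION_COST = {"read": 1, "write": 5, "delete": 20}


def evaluate_action(action, resource_count, risk_score, budget=100):
    """Score an action against a risk budget and pick a tiered response.

    risk_score is a 0.0 to 1.0 signal supplied by the risk layer.
    """
    cost = ACTION_COST.get(action, 10) * resource_count * (1 + risk_score)
    if cost <= budget:
        return Outcome.ALLOW
    if cost <= 3 * budget:
        return Outcome.ESCALATE  # genuinely exceptional: pull in a human
    return Outcome.CONTAIN  # far over budget: stop and limit the blast radius


print(evaluate_action("read", 10, 0.1))    # Outcome.ALLOW
print(evaluate_action("delete", 5, 0.5))   # Outcome.ESCALATE
print(evaluate_action("delete", 40, 0.2))  # Outcome.CONTAIN
```

Note how rarely the human tier fires in this shape: routine reads never interrupt anyone, so the one escalation that does arrive has a chance of being read rather than rubber-stamped.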

Governance embedded at the core. Policy certification, approval workflows, version history, audit trails, and structured promotion across environments must be built in, not bolted on. The barrier to authorization modernization isn't technology; it's organizational trust. If security teams and compliance officers can't see, understand, and approve the policies, they'll block adoption. And they'll be right to. As Sarah Taraporewalla of Thoughtworks put it in her blog post, The Age of Intent is upon us and intent has emerged as the new interface.

Interoperability with open standards, above all else. This is the non-negotiable. Any platform that locks you into a proprietary policy language is repeating the mistakes of the last twenty years. The platform must support AuthZEN for standard PDP/PEP communication, Cedar and OPA/Rego for policy-as-code, and SSF/CAEP for real-time signal ingestion. It should let you bring your own Policy Decision Point. It should treat AI agents, MCP servers, and autonomous workflows as first-class identity citizens alongside human users. And it should deploy in your environment, your VPC, your hybrid cloud, your infrastructure, not lock your authorization data into someone else's cloud.

This last point deserves emphasis. The entire history of authorization's immaturity traces back to coupling and lock-in: authorization was coupled to application code, locked into proprietary vendor implementations, fragmented across silos. The way out is the same way we solved it for authentication, open standards, clean interfaces, and architectural freedom. Look how fast MCP has grown! Any platform that claims to modernize authorization while introducing new forms of lock-in is solving the wrong problem.

From Snapshots to Streams

The agentic era is ending the "Snapshot" era of security. We are moving toward Event-Time Governance, where access is a continuous conversation between the agent and the authorization fabric. Risk goes up, permissions shrink. Context changes, access adapts. Something looks wrong, the system responds — in milliseconds, without waiting for a human to open a ticket.

The pieces are falling into place. The OpenID AuthZEN Authorization API 1.0 gives us an interoperable, vendor-neutral standard for authorization. Policy engines like OPA and Cedar give us portable policy languages. SSF and CAEP give us real-time risk signals. And a new generation of Authorization Governance Platforms is emerging to unify these capabilities under a single, standards-based control plane.

A couple of bottom lines. First, the future of AI security won't be won by the person with the best "Agentic IGA" tool. It will be won by the architects and vendors who build an authorization fabric on open standards, one that turns every access decision into a real-time conversation between who you are, what you want to do, and whether the intent is right at that moment in time. Second, we will crack this one, and when we do we can make progress on two issues we have had for decades. One is dynamic Access-as-Code models, given some of these platforms will let us bridge from the RBAC world. The other is us: we have not been able to solve these basic issues for humans, so if we solve them for agents, we will have solved them for our traditional non-deterministic friends too... we humans.

As Rory Sutherland, arguably the sharpest thinker on the gap between what we measure and what actually matters, likes to point out in his 2026 predictions (the best 20 minutes you can spend today): the real value isn't what you do, it's how you think.

The agentic era forces us to look at access control from a completely different angle. Not "who has the role" but "what is actually happening right now, and does it meet the intent?"

References

- OpenID AuthZEN Authorization API 1.0 — O. Gazitt (Aserto), D. Brossard (Axiomatics), A. Tulshibagwale (SGNL), Eds. OpenID Foundation, January 2026. Final Standard. https://openid.net/specs/authorization-api-1_0.html

- OpenID Shared Signals Framework (SSF) and Continuous Access Evaluation Profile (CAEP) — OpenID Foundation. Enables interoperable streams of real-time security events between transmitters and receivers. https://openid.net/specs/openid-caep-specification-1_0.html

- NIST SP 800-162 — Guide to Attribute Based Access Control (ABAC) Definition and Considerations. Referenced by the AuthZEN specification as a foundational model for the PDP/PEP separation pattern.

- XACML (eXtensible Access Control Markup Language) — OASIS Standard. The original specification defining Policy Decision Points and Policy Enforcement Points, upon which the AuthZEN model builds.